Artificial intelligence is often framed as a software revolution.

In reality, it is also an infrastructure revolution.

Every AI model relies on vast physical systems that include massive data centers, specialized chips, and enormous quantities of electricity.

As organizations deploy AI across customer support, analytics, automation, and decision-making, demand for computing power is growing rapidly.

What many executives are only beginning to realize is that the real bottleneck in AI may not be algorithms.

It may be energy and infrastructure.

The Massive Compute Requirements of Modern AI

Modern artificial intelligence models require extraordinary computing resources.

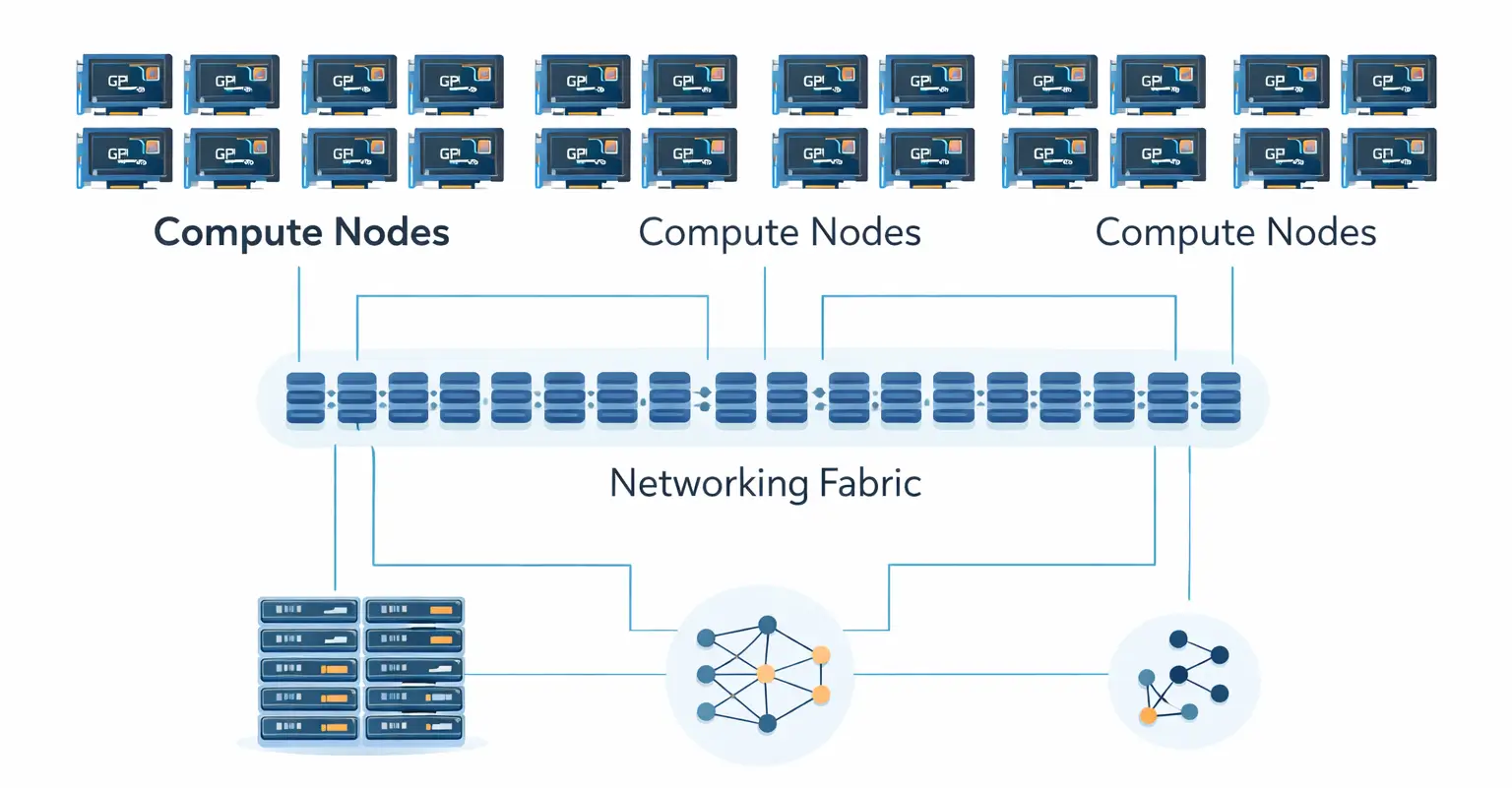

Training a large language model involves processing enormous datasets on massive GPU clusters that run continuously for weeks or months.

A single training run for a frontier model can require tens of thousands of high-performance GPUs.

These clusters are not collections of ordinary servers. They are tightly interconnected systems designed specifically for high-performance parallel computation.

The result is unprecedented compute demand, and the trend shows no sign of slowing.

Why AI Consumes So Much Electricity

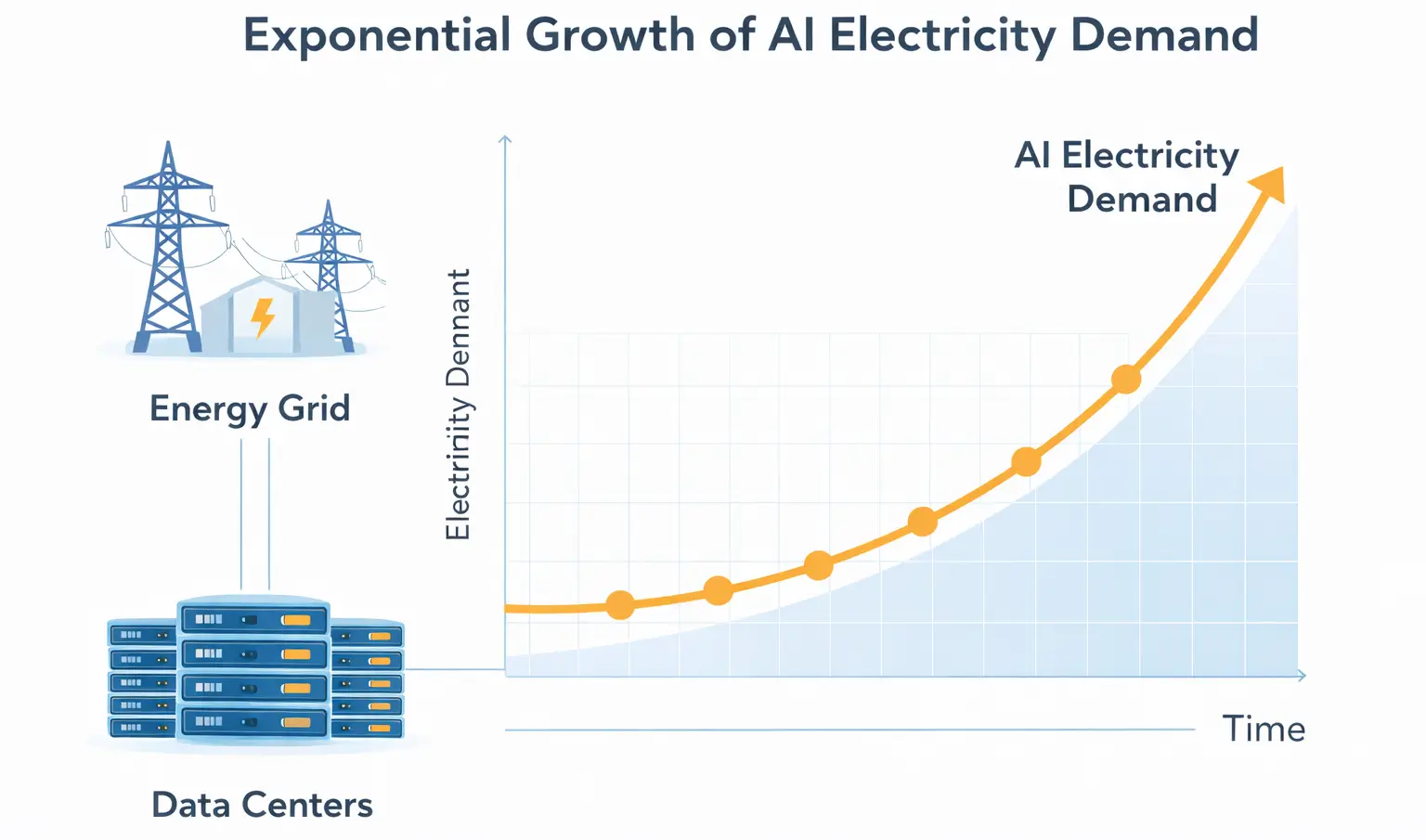

The energy consumption of AI workloads is driven by several factors.

First, modern GPUs are extremely power-intensive. A single high-performance AI GPU can draw several hundred watts under load.

Multiply that across thousands or tens of thousands of GPUs in a cluster, and the electricity requirements become enormous.
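As a rough back-of-the-envelope sketch, the multiplication looks like this. The 700 W per-GPU draw and the 10,000-GPU cluster size are illustrative assumptions, not measurements of any particular system, and the function name is hypothetical.

```python
def cluster_gpu_power_mw(num_gpus: int, watts_per_gpu: float) -> float:
    """Return the combined GPU power draw in megawatts."""
    return num_gpus * watts_per_gpu / 1_000_000


# Assumed figures for illustration only.
power_mw = cluster_gpu_power_mw(num_gpus=10_000, watts_per_gpu=700.0)
print(f"GPUs alone: {power_mw:.1f} MW")
```

Under these assumptions the GPUs alone draw about 7 MW. The estimate leaves out CPUs, networking, storage, and cooling, so a real facility would need more.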

Second, AI workloads often run continuously. Unlike traditional computing tasks that may be intermittent, AI model training can run nonstop for weeks or months.

Third, cooling infrastructure adds substantially to power consumption. Data centers housing dense GPU clusters generate large amounts of heat.

Air handling and liquid cooling systems that remove this heat require additional electricity on top of what the chips themselves draw.

Together, these factors create some of the most power-intensive computing environments ever built.
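One common way to combine these factors is power usage effectiveness (PUE), the ratio of total facility energy to the energy used by the computing equipment. The sketch below estimates the energy of a continuous training run. The 7 MW load, 30-day duration, and PUE of 1.3 are assumed values for illustration, and the function name is hypothetical.

```python
def training_run_energy_mwh(it_power_mw: float, days: float, pue: float) -> float:
    """Estimate total facility energy in MWh for a continuous run.

    pue is power usage effectiveness: total facility energy divided by
    IT equipment energy, so 1.0 would mean no cooling or other overhead.
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    hours = days * 24  # the run is assumed to be nonstop
    return it_power_mw * hours * pue


# Assumed figures for illustration only: 7 MW of IT load, 30 days, PUE 1.3.
energy = training_run_energy_mwh(it_power_mw=7.0, days=30, pue=1.3)
print(f"Estimated facility energy: {energy:,.0f} MWh")
```

With these inputs the estimate comes to roughly 6,552 MWh, of which about 1,512 MWh is overhead for cooling and other facility systems rather than computation.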

The Data Center Expansion Boom

To support AI workloads, technology companies are rapidly expanding global data center capacity.

New facilities are being built at a pace rarely seen in the history of computing.

These facilities require:

- massive land areas

- high-capacity power connections

- specialized networking infrastructure

Some new data centers are designed specifically for AI workloads, with power densities far higher than traditional cloud infrastructure.

In some regions, local power grids are struggling to keep up with demand.

Why AI Energy Demand Is Becoming a Geopolitical Issue

The growth of AI infrastructure is beginning to influence national policy.

Governments increasingly view AI capability as a strategic priority.

This means securing access to:

- semiconductor manufacturing

- advanced chips

- energy infrastructure

- data center capacity

Energy availability is becoming a critical factor in where AI capacity can be built.

Regions with abundant electricity and strong grid infrastructure may become global hubs for AI data centers.

The AI Compute Arms Race

At the center of the AI boom is a global competition for computing hardware.

Companies are racing to secure large quantities of specialized AI chips.

Demand for GPUs has surged as organizations deploy AI across industries.

At the same time, major technology companies are investing heavily in custom silicon designed specifically for AI workloads.

The result is a rapidly evolving hardware ecosystem.

Compute power is becoming one of the most valuable strategic resources in the technology sector.

How Infrastructure Constraints May Shape AI Adoption

Despite rapid innovation, infrastructure limitations may slow the pace of AI expansion.

Organizations deploying AI must consider:

- compute availability

- infrastructure costs

- energy consumption

- system efficiency

These constraints are driving research into more efficient AI architectures and hardware.

Energy efficiency may become one of the most important factors in the next phase of AI development.

What This Means for Businesses

For business leaders, the infrastructure realities of AI have several implications.

AI adoption is not simply about software tools. It is about access to computing resources.

The cost of AI workloads can vary significantly depending on infrastructure efficiency.
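To make that concrete, here is a minimal sketch comparing the electricity bill for the same workload in two facilities that differ only in power usage effectiveness (PUE), the ratio of total facility energy to the energy used by the computing hardware. The workload size, electricity price, and both PUE values are hypothetical figures chosen for illustration.

```python
def electricity_cost_usd(it_energy_mwh: float, pue: float, usd_per_mwh: float) -> float:
    """Electricity cost for a workload, including facility overhead via PUE."""
    return it_energy_mwh * pue * usd_per_mwh


# Assumed figures for illustration only.
IT_ENERGY_MWH = 5_000.0   # energy used by the computing hardware itself
PRICE_USD_PER_MWH = 80.0  # hypothetical electricity price

efficient = electricity_cost_usd(IT_ENERGY_MWH, pue=1.2, usd_per_mwh=PRICE_USD_PER_MWH)
inefficient = electricity_cost_usd(IT_ENERGY_MWH, pue=1.6, usd_per_mwh=PRICE_USD_PER_MWH)

print(f"PUE 1.2: ${efficient:,.0f}")
print(f"PUE 1.6: ${inefficient:,.0f}")
print(f"Difference: ${inefficient - efficient:,.0f}")
```

Under these assumptions the less efficient facility costs about $160,000 more in electricity for identical computing work. Hardware, staffing, and network costs are not included.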

Organizations that deploy AI strategically can gain significant advantages in productivity and automation.

However, poorly planned deployments may become expensive and difficult to scale.

Business leaders should approach AI adoption with a clear understanding of infrastructure requirements and long-term costs.

Conclusion

Artificial intelligence is transforming the global technology landscape.

Behind the software breakthroughs lies a vast and rapidly expanding infrastructure ecosystem.

Data centers, specialized chips, and energy systems are becoming the foundation of the AI economy.

As demand for compute and electricity continues to rise, these infrastructure constraints may shape the future of AI adoption.

For business leaders, understanding this shift is essential.

The companies that succeed with AI will not only adopt the technology. They will understand the infrastructure that powers it.