Artificial intelligence is being adopted across business faster than most organizations can fully understand it.

Companies are integrating AI into customer support systems, marketing operations, financial analysis, product development, and internal workflows. In many cases, employees are experimenting with AI tools independently, while technology teams explore more advanced integrations.

The speed of this adoption is remarkable.

But a concern is emerging.

Governance is not keeping up.

Across industries, businesses are deploying AI systems without clear policies, oversight structures, or risk management strategies. As AI becomes more deeply embedded into everyday operations, this lack of governance may become one of the most significant challenges organizations face in the coming decade.

Understanding this issue early may help business leaders avoid serious operational, legal, and reputational risks.

AI Adoption Is Outpacing Oversight

Artificial intelligence is becoming one of the fastest-adopted technologies in modern business history.

Organizations are using AI to:

- generate marketing content

- automate customer interactions

- analyze financial data

- summarize documents

- support internal decision-making

- assist with software development

The benefits can be substantial. AI can reduce manual work, improve efficiency, and accelerate analysis.

However, adoption often happens organically rather than strategically.

Different departments may begin using different AI tools independently. Employees may rely on generative AI systems without understanding how the underlying models work. Data may be shared with third-party platforms without clear guidelines.

Over time, this creates an environment where AI is influencing business operations without clear oversight.

The Hidden Risks of Unmanaged AI

When AI systems operate without governance, several risks begin to emerge.

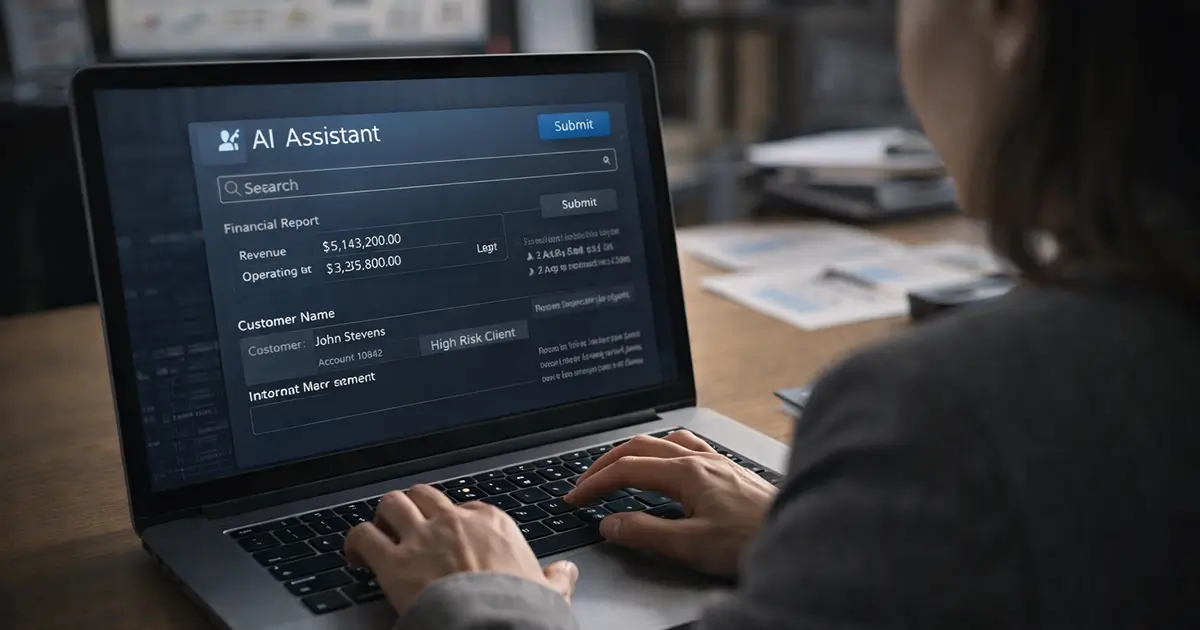

Data Exposure

Employees may inadvertently upload sensitive information into external AI systems.

Examples may include:

- customer data

- financial information

- internal documents

- proprietary business processes

Without policies in place, this kind of exposure can go unnoticed.

Inaccurate Outputs

Generative AI models sometimes produce incorrect information.

If AI outputs are used without verification, businesses may make decisions based on flawed analysis.

Operational Dependence

As AI tools become integrated into workflows, organizations may become dependent on systems they do not fully control or understand.

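This creates strategic risk: a vendor outage, pricing change, or model update can disrupt work the organization cannot easily take back in-house.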

Legal and Compliance Exposure

Regulators are increasingly focused on how companies deploy AI.

Organizations that lack governance structures may face future compliance challenges.

Why Most Companies Lack AI Governance

Despite these risks, most businesses have not implemented formal AI governance strategies.

Several factors contribute to this gap.

AI Adoption Is Bottom-Up

In many companies, AI adoption begins with individual employees experimenting with tools.

By the time leadership becomes aware of widespread use, the technology may already be deeply embedded.

Governance Frameworks Are Still Emerging

Unlike traditional IT governance, AI governance frameworks are still developing.

Organizations often struggle to determine:

- who owns AI oversight

- how models should be evaluated

- how risks should be assessed

- what policies should be implemented

Leadership Awareness Is Limited

Many executives understand that AI is important but have not yet fully explored its operational implications.

As a result, governance discussions are often delayed.

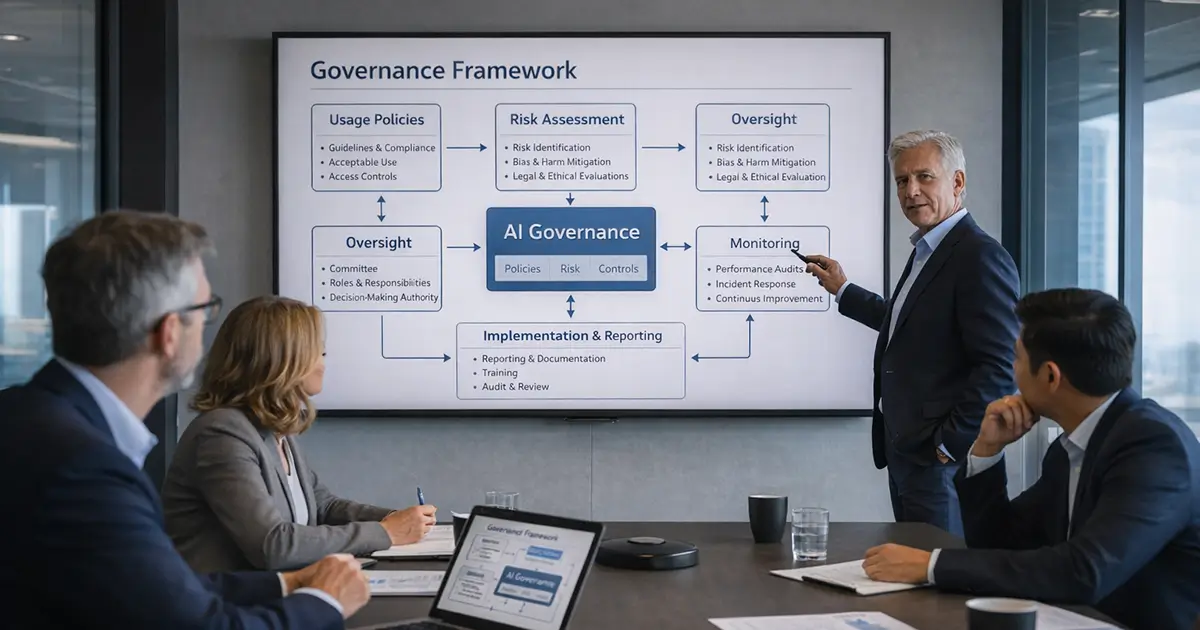

What AI Governance Actually Means

AI governance does not necessarily require complex bureaucratic systems.

At its core, governance simply means establishing clear oversight and accountability.

Effective AI governance typically includes several elements.

Usage Policies

Organizations should define:

- which AI tools are approved

- how employees may use them

- what types of data may be shared

- where human review is required
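For teams that want the policy to be checkable rather than only written down, the four points above can be captured as data. The following is a minimal sketch, not a definitive implementation: the tool names, data categories, and use cases are hypothetical placeholders, and `UsagePolicy`, `Decision`, and `check_use` are names invented for this illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UsagePolicy:
    # Approved tools mapped to the data categories each may receive.
    # A tool that is not a key here is treated as unapproved.
    allowed_data: dict[str, frozenset[str]]
    # Use cases where a person must review the output before it is used.
    review_required: frozenset[str]


@dataclass(frozen=True)
class Decision:
    allowed: bool
    needs_human_review: bool
    reason: str


def check_use(policy: UsagePolicy, tool: str, data_category: str, use_case: str) -> Decision:
    """Check one proposed use of an AI tool against the policy."""
    if tool not in policy.allowed_data:
        return Decision(False, False, f"{tool} is not an approved tool")
    if data_category not in policy.allowed_data[tool]:
        return Decision(False, False, f"{data_category} data may not be shared with {tool}")
    return Decision(True, use_case in policy.review_required, "permitted")


# Hypothetical policy: every name below is an assumption for illustration.
POLICY = UsagePolicy(
    allowed_data={
        "internal-assistant": frozenset({"public", "internal"}),
        "external-chatbot": frozenset({"public"}),
    },
    review_required=frozenset({"customer-communication", "financial-analysis"}),
)

decision = check_use(POLICY, "external-chatbot", "customer", "drafting")
print(decision.allowed, decision.reason)
```

Keeping the policy as plain data means it can be reviewed by legal or compliance staff without reading the checking logic.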

Risk Assessment

AI systems should be evaluated based on their potential impact.

For example:

- internal productivity tools typically carry lower risk

- systems that influence customer decisions may carry higher risk
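One way to make impact-based evaluation repeatable is a simple scoring rubric. The sketch below is an assumption-laden illustration, not an established standard: the three yes/no questions, the weights, and the tier thresholds are all hypothetical and would need to be set by each organization.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    ELEVATED = "elevated"
    HIGH = "high"


@dataclass(frozen=True)
class AISystem:
    name: str
    affects_customers: bool         # influences decisions about customers
    uses_sensitive_data: bool       # processes customer or financial data
    output_reviewed_by_human: bool  # a person checks output before use


def assess(system: AISystem) -> RiskTier:
    """Assign a tier from a small additive score (weights are assumptions)."""
    score = 0
    if system.affects_customers:
        score += 2
    if system.uses_sensitive_data:
        score += 1
    if not system.output_reviewed_by_human:
        score += 1

    if score >= 3:
        return RiskTier.HIGH
    if score >= 1:
        return RiskTier.ELEVATED
    return RiskTier.LOW


# Hypothetical systems mirroring the two examples above.
summarizer = AISystem("meeting-summarizer", False, False, True)
pricing_bot = AISystem("customer-pricing-assistant", True, True, False)
print(assess(summarizer).value, assess(pricing_bot).value)
```

A rubric like this is deliberately coarse. Its value lies in forcing the same questions to be asked of every system, not in the precision of the score.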

Oversight Structures

Many organizations create cross-functional oversight groups that include:

- technology leaders

- legal teams

- compliance specialists

- operations leaders

This ensures AI decisions are evaluated from multiple perspectives.

Monitoring and Auditing

AI systems should be periodically reviewed to ensure they are operating as expected.

This is particularly important for systems that influence critical business decisions.

The Governance Gap in Small and Mid-Sized Businesses

While large enterprises are beginning to invest in AI governance programs, smaller organizations often lack the resources to do the same.

This creates a significant governance gap.

Small and mid-sized businesses may adopt AI tools quickly because they are inexpensive and easy to use. However, these companies may not have:

- dedicated compliance teams

- formal technology governance structures

- internal AI expertise

As AI adoption grows, this gap may become increasingly problematic.

Organizations that fail to establish basic governance may encounter unexpected risks later.

How Governments and Regulators Are Responding

Governments around the world are beginning to recognize the governance challenge.

Several regulatory frameworks are emerging.

Examples include:

- the European Union AI Act

- national AI safety initiatives

- regulatory guidance on algorithm transparency

- industry standards for responsible AI

These efforts suggest that AI governance will become an increasingly important compliance issue.

Businesses that begin building governance capabilities early will likely be better prepared for future regulations.

What Business Leaders Should Do Now

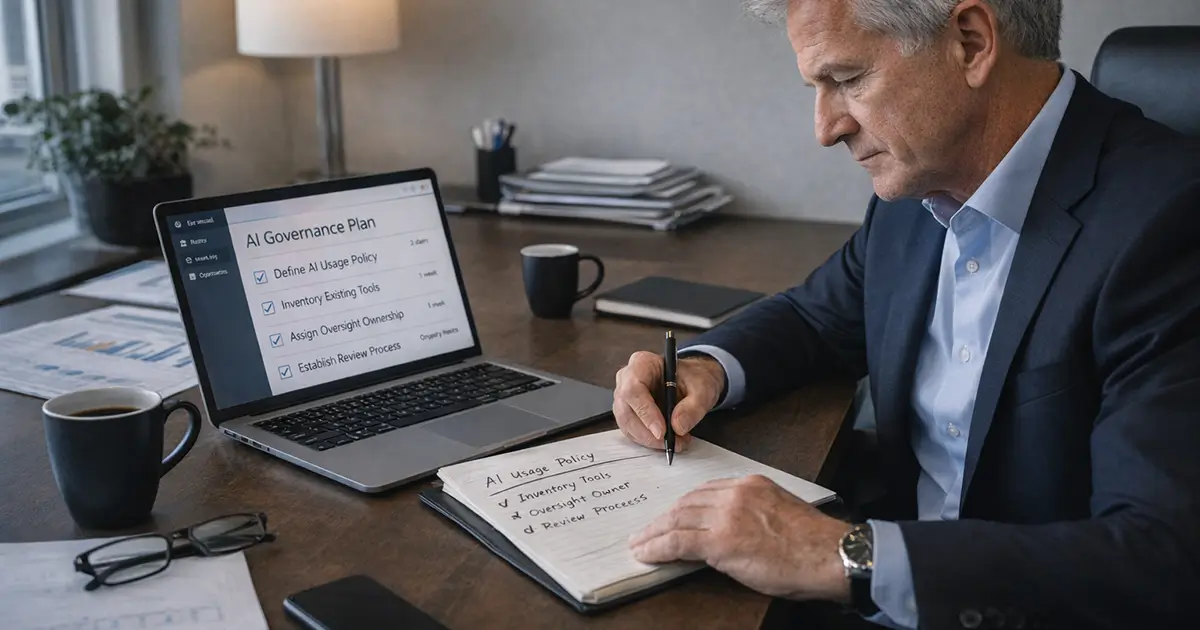

Organizations do not need to build complex governance programs immediately.

However, several practical steps can help reduce risk.

Develop an AI Usage Policy

Define how employees may use AI tools and what data may be shared.

Inventory AI Use

Identify which AI systems employees are currently using.
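An inventory can start as a short staff survey rolled up by tool. The sketch below assumes responses arrive as (department, tool) pairs. The function name `build_inventory` and all sample values are hypothetical.

```python
from collections import defaultdict


def build_inventory(reports, approved_tools):
    """Group self-reported AI usage by tool and flag unapproved tools.

    reports: iterable of (department, tool) pairs, e.g. from a staff survey.
    approved_tools: set of tool names the organization has approved.
    """
    departments_by_tool = defaultdict(set)
    for department, tool in reports:
        departments_by_tool[tool].add(department)

    return [
        {
            "tool": tool,
            "departments": sorted(departments),
            "approved": tool in approved_tools,
        }
        for tool, departments in sorted(departments_by_tool.items())
    ]


# Hypothetical survey responses.
reports = [
    ("marketing", "external-chatbot"),
    ("finance", "spreadsheet-copilot"),
    ("support", "external-chatbot"),
]
inventory = build_inventory(reports, approved_tools={"spreadsheet-copilot"})
unapproved = [row["tool"] for row in inventory if not row["approved"]]
print(unapproved)
```

The list of unapproved tools is a natural starting agenda for whoever is assigned oversight responsibility.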

Assign Oversight Responsibility

Determine who is responsible for evaluating new AI tools.

Establish Review Processes

Create basic procedures for reviewing AI outputs when they are used in important decisions.
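A review procedure can be as simple as a sign-off gate: output tied to an important decision is not used until a named person has reviewed it. The following is a minimal sketch under that assumption. `AIOutput`, `sign_off`, and `ready_to_use` are names made up for this example, and what counts as an "important decision" is left to the organization.

```python
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class AIOutput:
    content: str
    important_decision: bool           # set by whoever requests the output
    reviewed_by: Optional[str] = None  # name of the human reviewer, if any


def sign_off(output: AIOutput, reviewer: str) -> AIOutput:
    """Return a copy of the output marked as reviewed by the given person."""
    return replace(output, reviewed_by=reviewer)


def ready_to_use(output: AIOutput) -> bool:
    """Routine output passes; important output needs a recorded reviewer."""
    return (not output.important_decision) or output.reviewed_by is not None


# Hypothetical example: a forecast that will inform a budget decision.
forecast = AIOutput("Q3 revenue forecast summary", important_decision=True)
print(ready_to_use(forecast))                              # blocked until reviewed
print(ready_to_use(sign_off(forecast, "finance-lead")))    # usable after sign-off
```

Recording who reviewed the output also leaves an audit trail for later monitoring.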

Monitor Regulatory Developments

AI regulations are evolving rapidly.

Staying informed will help organizations adapt more easily.

Governance Will Shape the Future of AI Adoption

Artificial intelligence has the potential to transform how businesses operate.

But as with any powerful technology, responsible oversight is essential.

The organizations that benefit most from AI will not necessarily be the ones that adopt it the fastest.

They will be the ones that adopt it thoughtfully.

Over the coming years, AI governance will likely move from an optional discussion to a core leadership responsibility.

Businesses that begin building governance practices today will be better positioned to use AI safely, effectively, and strategically.