Aligned with the NIST AI Risk Management Framework (AI RMF 1.0)

Executive Purpose

Artificial intelligence introduces operational leverage and risk simultaneously.

This playbook establishes a structured governance model that enables small and midsize businesses (SMBs) to deploy AI responsibly, protect sensitive data, maintain accountability, and align AI initiatives with measurable business objectives.

The framework is organized around the four core NIST AI RMF functions:

- Govern

- Map

- Measure

- Manage

1. GOVERN

Organizational Oversight, Accountability, and Policy

This function establishes leadership responsibility and internal controls.

1.1 Leadership Accountability

Define:

- Executive owner of AI initiatives

- Cross-functional AI review group

- Approval authority for new AI tools

- Escalation path for risk issues

AI accountability must be explicit.

1.2 Acceptable Use Policy

Document:

- Approved AI tools

- Prohibited data categories

- Human review requirements

- Output validation expectations

- Data retention standards

All employees should formally acknowledge the AI usage guidelines.

1.3 Risk Classification

Categorize AI use cases:

Low Risk

Marketing drafts, brainstorming, and internal summaries

Moderate Risk

Customer communications, proposal generation, and reporting

High Risk

Financial decisions, HR matters, legal content, and automated customer decisions

High-risk use cases require additional review and documentation.
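The tiering above is easier to apply consistently when each use case is recorded against a fixed category list, so that two reviewers classify the same request the same way. The Python sketch below is illustrative only: the category keys, the `classify` and `requires_additional_review` helpers, and the choice to default unknown categories to High Risk are assumptions, not part of the NIST AI RMF.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = 1
    MODERATE = 2
    HIGH = 3


# Hypothetical category keys mirroring the examples in section 1.3.
CATEGORY_TIERS = {
    "marketing_draft": RiskTier.LOW,
    "brainstorming": RiskTier.LOW,
    "internal_summary": RiskTier.LOW,
    "customer_communication": RiskTier.MODERATE,
    "proposal_generation": RiskTier.MODERATE,
    "reporting": RiskTier.MODERATE,
    "financial_decision": RiskTier.HIGH,
    "hr_matter": RiskTier.HIGH,
    "legal_content": RiskTier.HIGH,
    "automated_customer_decision": RiskTier.HIGH,
}


@dataclass
class UseCase:
    name: str
    category: str


def classify(use_case: UseCase) -> RiskTier:
    # Unrecognized categories default to HIGH so nothing skips review by
    # accident (an assumption made for this sketch).
    return CATEGORY_TIERS.get(use_case.category, RiskTier.HIGH)


def requires_additional_review(use_case: UseCase) -> bool:
    # Section 1.3: high-risk use cases need additional review and documentation.
    return classify(use_case) is RiskTier.HIGH


if __name__ == "__main__":
    print(classify(UseCase("Q3 newsletter draft", "marketing_draft")).name)  # LOW
```

Defaulting to the strictest tier means a new, unlisted use case is reviewed first and reclassified later, rather than slipping through as low risk.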

1.4 Vendor Governance

Evaluate AI vendors based on:

- Data handling policies

- Disclosures on whether customer data is used to train models

- Security certifications

- Integration capabilities

- Business stability

AI vendors should be held to the same due-diligence standards as financial or IT vendors.

2. MAP

Context, Intended Use, and Impact Assessment

This function defines how AI fits into your environment.

2.1 Business Context Documentation

For each AI initiative:

- Define business objective

- Document affected workflow

- Identify stakeholders

- Clarify decision impact

AI must be anchored to a specific operational context.

2.2 Data Mapping

Identify:

- Data sources used

- Sensitive data categories

- Data flow between systems

- Storage locations

- Third-party access

Understanding data movement reduces unintended exposure.
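A data map can be kept as a simple list of flows, which makes it possible to check mechanically whether any prohibited data category from the acceptable use policy (section 1.2) reaches a third party. The Python sketch below assumes a hypothetical `DataFlow` record and an example set of prohibited categories; substitute the categories from your own policy.

```python
from dataclasses import dataclass, field


@dataclass
class DataFlow:
    """One row of a data map: where data comes from and where it goes."""
    source: str                 # e.g. "CRM"
    destination: str            # e.g. an AI vendor's API
    data_categories: set[str] = field(default_factory=set)
    storage_location: str = ""
    third_party: bool = False   # True if an outside vendor receives the data


# Hypothetical prohibited categories; replace with those in your policy.
PROHIBITED = frozenset({"customer_pii", "payment_card", "health_records"})


def flag_exposures(
    flows: list[DataFlow], prohibited: frozenset[str] = PROHIBITED
) -> list[tuple[str, set[str]]]:
    """Return (destination, offending categories) for third-party flows carrying prohibited data."""
    findings = []
    for flow in flows:
        offending = flow.data_categories & prohibited
        if flow.third_party and offending:
            findings.append((flow.destination, offending))
    return findings
```

Internal flows are not flagged here, on the assumption that the main exposure risk is data leaving the organization; a stricter policy could also flag internal storage of prohibited categories.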

2.3 Risk Identification

Assess:

- Operational risk

- Reputational risk

- Compliance risk

- Bias and fairness risk

- Automation risk

Document risk exposure before deployment.

3. MEASURE

Performance, Reliability, and Risk Evaluation

AI systems must be evaluated continuously.

3.1 Output Quality Controls

Establish:

- Human review checkpoints

- Accuracy validation procedures

- Benchmark testing for repeat tasks

- Documentation of failure cases

Trust requires verification.

3.2 Performance Metrics

Track:

- Efficiency gains

- Error reduction

- Cost impact

- Revenue influence

- Adoption rate

Measurement ties AI to real business value.
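Most of these metrics reduce to simple arithmetic once the inputs are tracked monthly. The Python sketch below computes net benefit, ROI, error reduction, and adoption rate from a hypothetical `MonthlySnapshot` record. It assumes time spent on human review (section 3.1) should be subtracted from hours saved. Revenue influence is omitted because attributing revenue to an AI tool usually requires a business-specific method.

```python
from dataclasses import dataclass


@dataclass
class MonthlySnapshot:
    # Hypothetical inputs an SMB might track for one AI-assisted workflow.
    hours_saved: float      # staff hours saved by the workflow
    review_hours: float     # hours spent on human review checkpoints
    hourly_cost: float      # loaded cost of one staff hour
    tool_cost: float        # monthly subscription or usage cost
    baseline_errors: int    # errors per month before adoption
    current_errors: int     # errors per month after adoption
    active_users: int
    licensed_users: int


def net_benefit(s: MonthlySnapshot) -> float:
    # Labor savings net of review effort, minus what the tool costs.
    return (s.hours_saved - s.review_hours) * s.hourly_cost - s.tool_cost


def roi(s: MonthlySnapshot) -> float:
    # Net benefit per unit of tool spend.
    if s.tool_cost <= 0:
        raise ValueError("tool_cost must be positive")
    return net_benefit(s) / s.tool_cost


def error_reduction(s: MonthlySnapshot) -> float:
    # Fractional drop in errors relative to the pre-AI baseline.
    if s.baseline_errors == 0:
        return 0.0
    return (s.baseline_errors - s.current_errors) / s.baseline_errors


def adoption_rate(s: MonthlySnapshot) -> float:
    # Share of licensed seats that are actually in use.
    return s.active_users / s.licensed_users if s.licensed_users else 0.0
```

With hypothetical inputs of 40 hours saved, 10 review hours, a $50 hourly cost, and a $500 monthly tool cost, the net benefit is (40 − 10) × 50 − 500 = $1,000 and the ROI is 2.0 (200%).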

3.3 Bias and Consistency Monitoring

For customer-facing or decision-support systems:

- Review outputs for fairness

- Monitor tone and compliance

- Evaluate consistency over time

Even SMBs should monitor for unintended patterns in AI output.

4. MANAGE

Ongoing Monitoring and Continuous Improvement

AI governance is not a one-time activity.

4.1 Incident Response Protocol

Define:

- What constitutes an AI incident

- Reporting process

- Containment steps

- Communication plan

Examples of AI incidents include:

- Data exposure

- Inaccurate customer communication

- Biased output

- Incorrect financial summaries

4.2 Change Management

When updating tools or models:

- Reassess risk level

- Revalidate workflows

- Retrain staff if necessary

- Update documentation

Governance evolves with the system.

4.3 Periodic Review

A quarterly review should include:

- Tool inventory

- Risk reassessment

- ROI measurement

- Policy updates

- Vendor review

Regular review prevents governance from drifting out of step with how AI is actually used.

AI Governance Maturity Model for SMBs

Level 1: Informal Use

Ad hoc experimentation, no documentation

Level 2: Policy Awareness

Basic acceptable use guidelines

Level 3: Structured Oversight

Documented workflows and risk classification

Level 4: Integrated Governance

AI tied to KPIs with defined review cycles

Level 5: Strategic Differentiation

AI governance embedded into competitive strategy

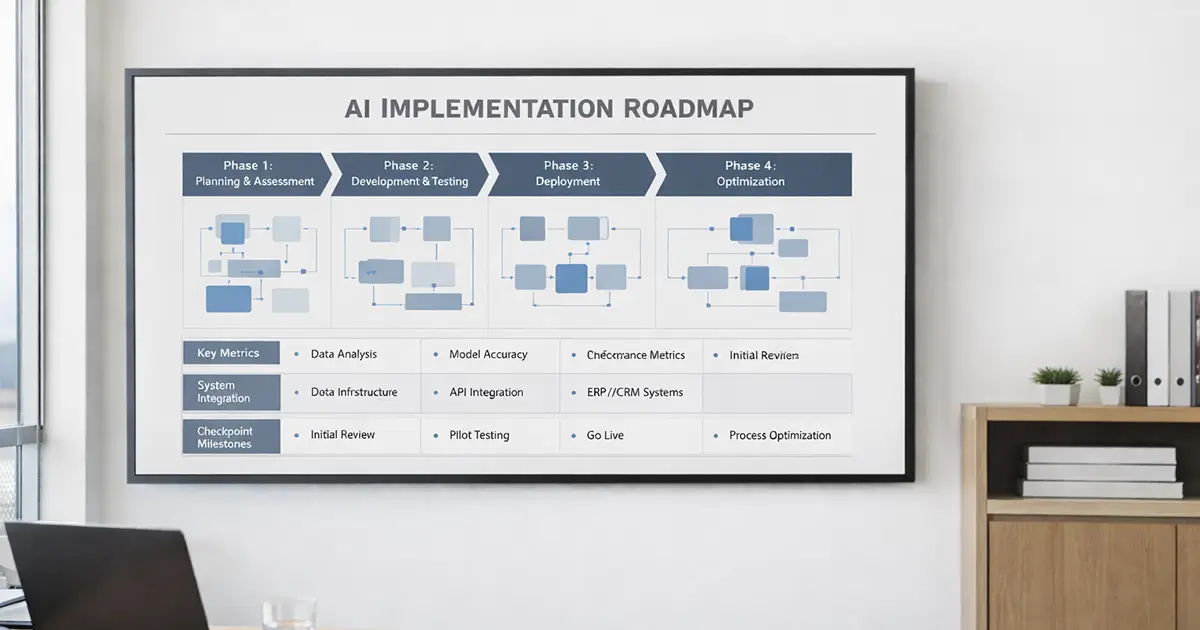
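The maturity model is cumulative: an organization sits at the highest level for which it meets that level's practices and every level below it. The Python sketch below scores a self-assessment on that basis. The practice names assigned to each level are a hypothetical paraphrase of the model, not an official rubric.

```python
# Hypothetical practices required at each level (Level 1 has no requirements).
LEVEL_CRITERIA: list[tuple[int, str, list[str]]] = [
    (2, "Policy Awareness", ["acceptable_use_policy"]),
    (3, "Structured Oversight", ["documented_workflows", "risk_classification"]),
    (4, "Integrated Governance", ["kpis_defined", "review_cycle_defined"]),
    (5, "Strategic Differentiation", ["governance_in_strategy"]),
]


def maturity_level(practices: set[str]) -> tuple[int, str]:
    """Return the highest level whose practices, and all lower levels' practices, are in place."""
    level, name = 1, "Informal Use"
    for lvl, lvl_name, required in LEVEL_CRITERIA:
        if not all(item in practices for item in required):
            break  # a gap at this level caps the score, whatever is done above it
        level, name = lvl, lvl_name
    return level, name
```

With only an acceptable use policy and risk classification in place, `maturity_level` returns `(2, "Policy Awareness")`, because documented workflows are still missing for Level 3.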

Implementation Roadmap for SMBs

Phase 1

Draft acceptable use policy

Inventory current AI tools

Assign executive owner

Phase 2

Map top three AI use cases

Classify risk levels

Implement review checkpoints

Phase 3

Establish a quarterly AI governance review

Track ROI metrics

Standardize training

Phase 4

Integrate AI performance metrics into strategic planning

AI governance is not about limiting innovation. It’s about protecting the organization while enabling disciplined growth.

SMBs that implement structured oversight early reduce risk, avoid fragmentation, and create sustainable operational advantage.